Algorithmic Vision

Algorithmic vision is the lens through which audiovisual archives become computable and explorable as immersive environments.

To describe how computational methods unlock the richness embedded in audiovisual (AV) archives, we adopt the notion of algorithmic vision (Uliasz, 2021). This term goes beyond the analysis of images alone: it refers to a broader epistemological regime in which moving images, sound, gesture, rhythm, and speech are all made machine-readable and restructured as operative images—data that can be acted upon computationally.

Under algorithmic vision, the archive is not seen as a fixed, curated set of narratives, but as a latent field of patterns, features, and entities that can be surfaced and transformed into numerical form—what we describe as a feature space. This space becomes the foundation for spatial mappings that are ultimately traversed in immersive systems. In this sense, algorithmic vision is both a way of seeing and a way of interpreting the archive: to look at it through a computational lens.

As Rodríguez Ortega (2022) notes, algorithmic vision emerges from the ontological transformation that cultural objects undergo through digitisation or direct digital production. Once digitised, archives can be reconceived as computational archives (Kenderdine, 2021): collections that can be enriched and restructured through two complementary processes—datafication (the extraction of information and metadata from media) and vectorisation (the translation of media features into numerical vectors). These processes operate at different levels but share a common effect: they recast AV archives into new epistemic objects, transforming not only how they can be accessed, but how they can be perceived, structured, and made meaningful.

Datafication

Datafication is the process of sculpting audiovisual (AV) archives into machine-readable forms, surfacing latent structures and semantic layers that are otherwise invisible to the naked eye.

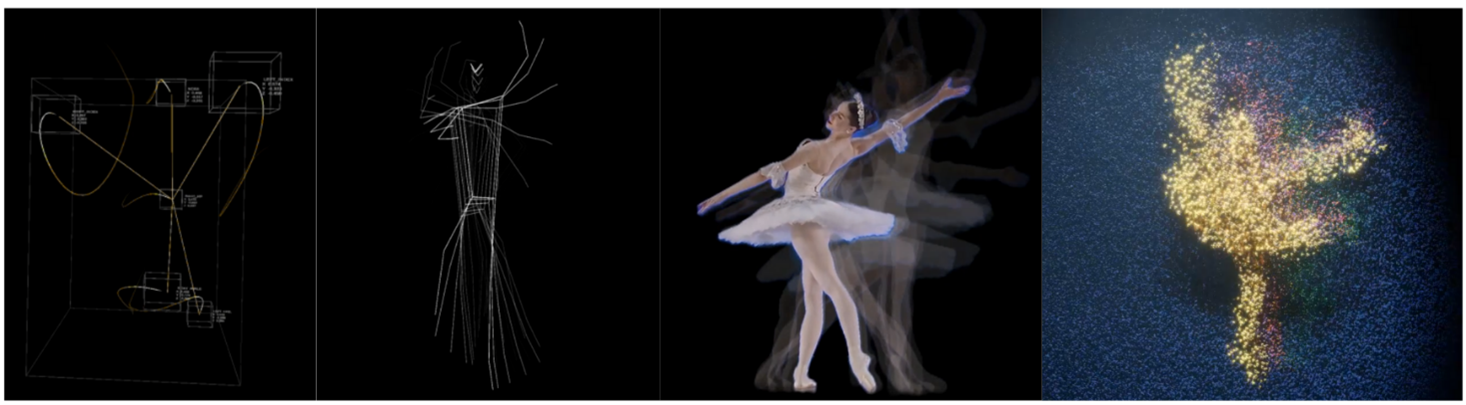

Rather than creating new content, datafication extracts information already embedded in the AV material and renders it computationally accessible. In this sense, it acts like a sculptor’s chisel: carving through the flow of images and sounds to reveal patterns, fragments, and features that can serve as new entry points into the archive. In Swiss Echoes, for instance, datafication extracts named locations from the spoken layers of the RTS archive, while in the Motion Visualisations in Dancing through Time, continuous features such as dancers’ body positions and depthmaps are surfaced from the original footage of the Prix de Lausanne collection.

This process can take different forms. Some methods generate continuous perceptual layers, such as transcripts, depth maps, or motion traces, which provide alternative ways of perceiving the same temporal events. Others produce discrete semantic extractions, such as named entities or indexed poses, which fragment the archive into new, recombinable units of meaning. Together, these modes of datafication reshape the ontology of AV archives: from bounded editorial works into modular, computationally parsed material.

The result is a reconfigured archive that supports novel pathways of access and interpretation. Instead of encountering a collection only as authored programmes or performances, visitors can explore it through semantically enriched fragments—navigating by place names in Swiss Echoes, by movement traces in Motion Visualisations, or by bodily poses in Posing at the Olympics. Datafication thus reframes the archive as a field of potentialities: a feature space from which new architectures of participation can emerge.

Vectorisation

Vectorisation transforms audiovisual material into numerical vectors, making cultural objects mathematically comparable within a continuous feature space.

Whereas datafication surfaces embedded information such as transcripts or poses, vectorisation fully projects AV material into numerical form. Each object—whether a video clip, a human pose, or a sound fragment—is encoded as a point in a high-dimensional space. This process does not simply describe media; it reconstitutes it as structured data that can be searched, clustered, and related according to mathematical measures of similarity.

The epistemological consequence of this operation lies in commensurability. Once expressed as vectors, previously distinct archival items become comparable across categories and even across collections. Meaning shifts from being defined by curatorial metadata (titles, dates, genres) to emerging from relative proximity in vector space. In this sense, vectorisation introduces a new archival logic: one grounded in continuous relations rather than discrete classifications.

Vectorisation can be achieved in two main ways. Explicit vectorisation involves manually defining interpretable attributes—such as joint angles or distances in Posing at the Olympics—and encoding them as feature vectors. Learned vectorisation, by contrast, relies on machine learning models that generate abstract embeddings, capturing latent patterns not directly accessible through manual description. Both methods recast archives into a new epistemic form: a computationally sculpted feature space where cultural objects are linked through measurable affinities.

This transformation reframes the archive from a static repository of authored works into a dynamic landscape of relations. In Posing at the Olympics, visitors can then access the feature space of vectorised human poses through the virtual puppet interface, essentially casting themselves as a point in the obtained latent space.

High-dimensional vector spaces, however, can also be visualised in two or three dimensions, through dimensionality reduction, offering audiences not a taxonomy of categories but an experiential map of cultural proximities that they can inhabit in an immersive system. It is to this computational mapping operation that we now turn.

Computational mappings

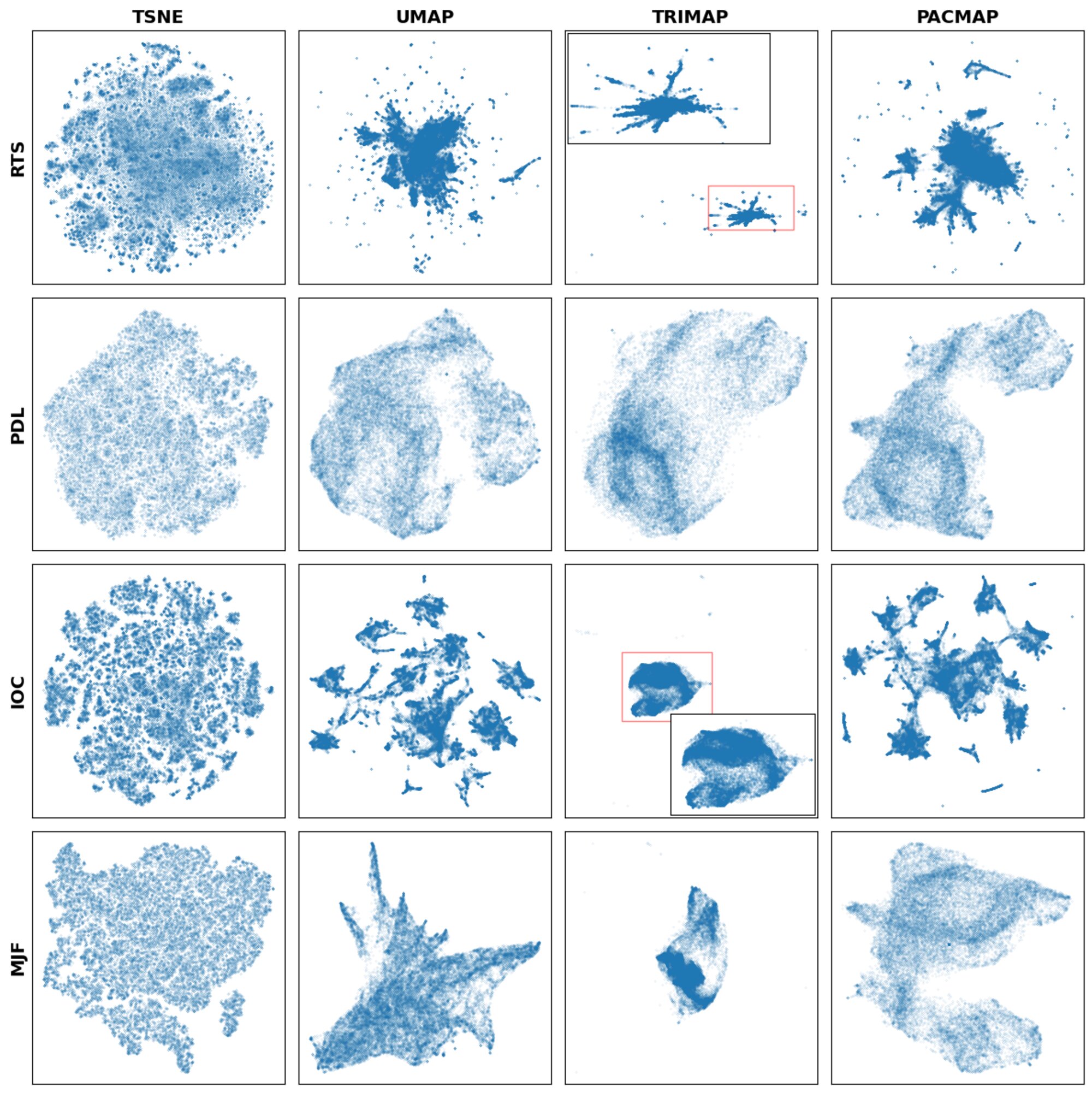

Dimensionality reduction (DR) techniques translate high-dimensional feature spaces into human-interpretable forms, enabling archives to be visualised, navigated, and even inhabited.

Once audiovisual materials have been vectorised, they exist as points in high-dimensional feature spaces—mathematically precise, but impossible to perceive directly. Computational mappings address this challenge. DR algorithms such as t-SNE or UMAP project these spaces into two or three dimensions, preserving as much of the original structure as possible while making emergent relationships visible. This operation renders latent structures—clusters, proximities, and continuities—perceptible to human observers. What may be hidden in metadata becomes legible as spatial patterns, which can be explored visually or embodied within immersive systems such as Panorama+.

Like sculpture, dimensionality reduction is never a neutral process. Each algorithm emphasises certain structures (local or global, clusters or outliers) while suppressing others. The resulting projection is therefore both computational and curatorial: it reveals one possible view of the archive, shaped by design choices, algorithmic parameters, and data properties.

When actualised in immersive installations, computational mappings of any kind—whether the geolocation of spoken places in Swiss Echoes, the projection of poses in Posing at the Olympics, or dimensionality-reduced latent spaces in Galaxy of Videos—undergo a shift. They move from being representations to be observed into spaces to be inhabited. Visitors step into these mappings, navigating them not only visually but bodily, discovering relations through orientation, proximity, and movement. In this way, computational mappings transform abstract data structures into architectures of participation, where archives are experienced as spatial environments rather than static catalogues. This transition lays the ground for a typology of narrative visualisations, through which we can differentiate and analyse the various ways AV archives are computationally sculpted into embodied forms of access.

Narrative Visualisation Typology

To make sense of how archives are transformed through computational sculpting, we propose a typology of narrative visualisations drawn from the installations developed in this research. The typology highlights how algorithmic processes, design decisions, and curatorial choices converge to render audiovisual archives explorable, meaningful, and situated within embodied experience.

Rather than measuring installations on a single scale, the typology distinguishes between qualitatively different strategies along two conceptual axes:

- Mode of Spatial Mapping: How is the archive computationally structured as a space for exploration?

- Mode of Contextualisation: What frame of reference situates the archive for the visitor?

Modes of Spatial Mapping

This first axis identifies the computational logic that shapes exploration:

- Query-based mapping – exploration is driven by visitor input, retrieving items that match a gesture or prompt (Posing at the Olympics).

- Perceptual mapping – the archive is re-presented through alternative computational renderings, such as motion traces or silhouettes (Motion Visualisations).

- Semantic mapping – archival materials are organised through interpretable metadata such as chronology or geography (Dancing through Time, Swiss Echoes).

- Emergent mapping – the archive is spatialised without pre-defined categories, with structures arising from the projection of the data itself (BiographScope, Galaxy of Videos).

Some mappings structure interaction without defining continuous space (e.g., query similarity, perceptual layers). Others produce navigable architectures, where the archive is experienced as an inhabitable environment.

Modes of Contextualisation

The second axis concerns how the mapping is anchored in relation to interpretative frames:

- Contextualised – structured within the archive’s own metadata (e.g., the chronological timeline in Dancing through Time).

- Recontextualised – situated within an external frame such as geography (Swiss Echoes).

- Decontextualised – presented without explicit external anchors, allowing space to emerge from the mapping itself (BiographScope, Galaxy of Videos).

Contextualised and recontextualised mappings rely on external reference frames, while decontextualised mappings generate their own.

Mapping the Design Space

This typology underscores that access to AV archives is shaped as much by computational operations as by interface design. By classifying different strategies, we can see how archives become activated as embodied architectures of participation—spaces that visitors do not just observe but inhabit, navigating them through physical engagement and discovery.

| Installation | Mapping Type | Contextualisation Type |

|---|---|---|

| Dancing Through Time | Semantic | Contextualised |

| BiographScope | Emergent | Decontextualised |

| Motion Visualisations | Perceptual | Recontextualised |

| Swiss Echoes | Semantic | Recontextualised |

| Posing at the Olympics | Query-based | Decontextualised |

| Galaxy of Videos | Emergent | Decontextualised |

This typology functions much like an annotated portfolio: a design space of possible strategies that future projects can probe, combine, or extend.